Literature Review: Bound By Semanticity: Universal Laws Governing The Generalization-Identification Tradeoff

This paper identifies a fundamental limit on internal representations. It derives closed-form expressions that define a universal Pareto front between a model’s probability of correct generalization and its probability of input identification, and it proves that finite semantic resolution creates an inescapable bottleneck that is independent of input space geometry.

Key Insights

-

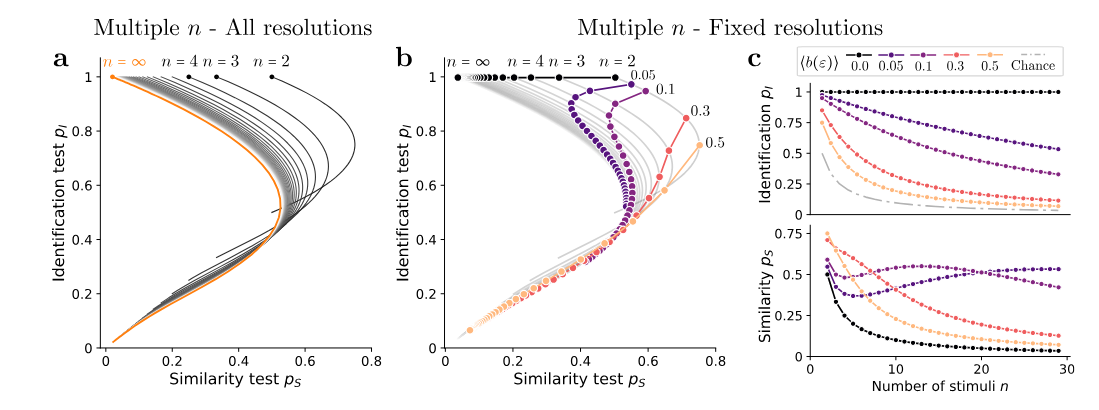

The Generalization-Identification Tradeoff The authors establish a formal framework quantifying the exact Pareto front between how well a model generalizes and how well it preserves input identity. Increasing the robustness of similarity measures for distant stimuli improves generalization but inevitably causes nearby stimuli to interfere, thereby destroying identification accuracy.

-

Multi-Item Processing Capacity Collapse When extending the theoretical analysis to process multiple inputs simultaneously, the framework predicts a sharp $1/n$ collapse in processing capacity for $n>2$ items.

-

Universality Across Neural Architectures The established resolution boundary is an informational constraint rather than a model-specific artifact. The tradeoff spontaneously self-organizes during the training of minimal ReLU networks and scales to highly complex state-of-the-art vision-language models and large language models.

Figure: Theoretical and empirical trajectories demonstrating how finite semantic resolution restricts models to a strict Pareto front, forcing a tradeoff between identification and generalization capabilities.
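
To make the tradeoff concrete, here is a minimal toy sketch in Python. It is not the paper's closed-form derivation. It assumes stimuli drawn uniformly from the interval [0, 1], a linearly decaying similarity with resolution `eps`, and two illustrative definitions of my own: a pair counts as "generalizable" if its similarity is nonzero, and the chance of telling a pair apart is taken to be one minus its similarity. The names `similarity` and `estimate_tradeoff` are hypothetical.

```python
import random

def similarity(x, y, eps):
    """Linearly decaying similarity that reaches zero at distance eps."""
    return max(0.0, 1.0 - abs(x - y) / eps)

def estimate_tradeoff(eps, n_pairs=20000, seed=0):
    """Monte Carlo estimate of (p_generalize, p_identify) in a toy 1-D stimulus space.

    Assumed toy definitions (not taken from the paper):
      p_generalize = fraction of random pairs with nonzero similarity
      p_identify   = 1 - mean similarity of random pairs
    """
    rng = random.Random(seed)  # fixed seed so every eps sees the same pairs
    related = 0       # pairs the model can relate to each other
    confusion = 0.0   # accumulated similarity, read as chance of confusing a pair
    for _ in range(n_pairs):
        x, y = rng.random(), rng.random()
        s = similarity(x, y, eps)
        related += s > 0.0
        confusion += s
    return related / n_pairs, 1.0 - confusion / n_pairs

if __name__ == "__main__":
    for eps in (0.05, 0.2, 0.5, 1.0):
        p_gen, p_id = estimate_tradeoff(eps)
        print(f"eps={eps:.2f}  p_generalize={p_gen:.3f}  p_identify={p_id:.3f}")
```

Under these assumptions, widening `eps` raises the first number and lowers the second, so no single resolution maximizes both. That is the qualitative shape of the front described above, nothing more.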

Example

Consider a large language model tasked with judging temporal similarity, for example comparing birth years. If asked whether person A, born in 1620, or person B, born in 1680, is temporally closer to a probe year of 1630, the model must form internal representations of these dates. If its semantic resolution is too coarse (that is, tuned heavily toward generalization), the representations of 1620 and 1680 interfere with one another in latent space. The model then fails the identification task because the representations are too blurred to tell apart, consistent with the theoretical Pareto limit.
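
The birth-year example can be sketched numerically. The snippet below is an illustration under assumptions, not the paper's experimental setup: it treats each year as a point on a line and uses a linearly decaying similarity whose width, `resolution`, is measured in years. The function names and the three resolution values are hypothetical choices.

```python
def similarity(year_a, year_b, resolution):
    """Linearly decaying similarity: 1 at distance 0, 0 beyond `resolution` years."""
    return max(0.0, 1.0 - abs(year_a - year_b) / resolution)

def compare_to_probe(year_a, year_b, probe, resolution):
    """Similarity of each candidate to the probe, plus the candidates' mutual overlap."""
    return {
        "sim_a": similarity(year_a, probe, resolution),
        "sim_b": similarity(year_b, probe, resolution),
        "overlap_ab": similarity(year_a, year_b, resolution),
    }

if __name__ == "__main__":
    # Narrow, intermediate, and very broad resolutions (assumed values).
    for resolution in (5, 30, 500):
        result = compare_to_probe(1620, 1680, 1630, resolution)
        print(resolution, {key: round(value, 2) for key, value in result.items()})
```

With a 5-year resolution neither candidate resembles the probe, so no closeness judgment is possible. At 30 years only 1620 overlaps with 1630, and the two candidates stay fully distinct. At 500 years all three years are at least 0.88 similar to one another, which is the blurred regime the example describes.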

Ratings

Novelty: 4.5/5 The mathematical formalization of this tradeoff across modalities provides an innovative, unified perspective on representational limits and the binding problem.

Clarity: 2.5/5 The heavy reliance on dense mathematical proofs restricts accessibility for broader audiences.

Personal Perspective

Framing generalization as minimizing the distance between a new stimulus and internal representations in a psychological space is a nice way to summarize the current understanding of the phenomenon. I personally think it is highly valuable to see papers that actually tackle and identify fundamental tradeoffs like this rather than just reporting empirical observations. We take too much in the literature for granted as strictly beneficial without considering the side effects, e.g. alignment causing social bias that favors a majority party, or an overly helpful agent that is left vulnerable to jailbreaks. The paper presents the idea that representations must be general enough to hold relationships with new ideas, but specific enough to be identified as discrete concepts, so that processing multiple items does not collapse into interference. Applying the linear representation hypothesis here highlights the danger: we can have male + queen = king, but we do not want the concept of “the king ate a piece of bread” to become some sort of bread monster with a crown on its head. While much of the mathematical formalism is dense, I think the application of information theory is great, and the empirical results neatly trace the derived theoretical curves, supporting the argument well.
