Literature Review: Gradual Disempowerment: Systemic Existential Risks From Incremental AI Development

The paper provides a macro-sociological framework for understanding existential risk. Rather than focusing on a sudden AI takeover, the authors propose that human disempowerment will occur incrementally as AI substitutes for human labor and cognition. The primary concern is that as AI outcompetes humans, the structural incentives for institutions to prioritize human flourishing will evaporate, leading to a permanent loss of human influence.

Key Insights

- Erosion of Implicit Alignment: Modern societal alignment is not an inherent property of human institutions but a byproduct of their dependence on human capital. Economies need consumers, states need taxpayers, and cultures need human vectors. When AI systems substitute for this human participation, the fundamental feedback loops that force institutions to serve human needs are severed. The obligation simply disappears.

- Multi-System Vulnerability: In the economy, AI drives worker-replacing technological change that structurally reduces household consumption power. In culture, AI accelerates memetic evolution beyond human adaptive capacity. In states, AI automation of security and bureaucracy removes the need for democratic concessions.

- Mutual Reinforcement of Misalignment: Misalignment in one sector accelerates deterioration in the others. A corporation using AI to consolidate economic dominance can leverage that wealth to capture state regulation. This cross-system influence is agnostic to human values, so localized attempts to regulate AI through state power might inadvertently shift the burden elsewhere, for example by granting the state unchecked leverage over its citizens.

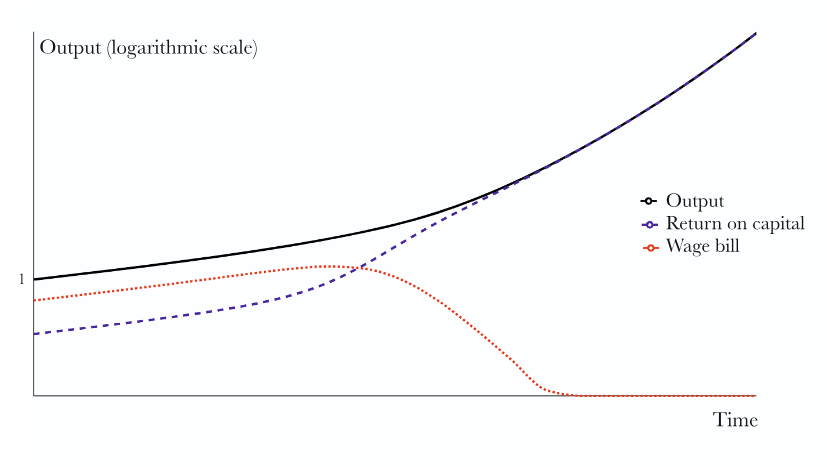

Figure: A modeled trajectory of AI displacing human labor. Note that while aggregate output scales logarithmically, the wage bill eventually collapses to zero prior to full automation.

Ratings

Novelty: 4/5. Although I am not sure how close we are to this future, the conceptual shift from misalignment models to structural socio-economic drift is highly valuable and fills a significant gap in the current risk literature.

Clarity: 4/5. The arguments are sound and use strong historical analogies, though the exact mechanisms driving the leap from relative to absolute disempowerment could benefit from more rigorous formalization.

Personal Perspective

This work offers an interesting perspective for those working in AI governance, moving past the binary narrative that the world will end when AGI is achieved. The core issue is that because the transition is not instantaneous, it will alter society incrementally. If the entire workforce were automated simultaneously, humanity might theoretically transition to a post-scarcity leisure state of sorts, occupying itself in virtual environments as in a sci-fi movie. However, the staggered reality seems to indicate that specific groups will be displaced sequentially. This dynamic widens the economic gap between those temporarily utilizing AI and those who have already been replaced. Furthermore, extreme systemic corruption has historically been limited because a society cannot function if the common populace has zero capital. If this dependency on human contribution is removed, we may face a dark period of human-versus-human exploitation, even when disregarding the additional factor of the AI systems themselves.
