- reflection

- research

- opinion

- creative

-

Research: Your Agent Can't Read the Code It's Running

Everyone asks "which tool" but no one ever asks "how tool"

-

Opinion: You Cannot Secure a System That Believes Everything It Reads

We gave AI tools, memory, and internet access. It played 2048.

-

Research: We Need a Same-Origin Policy for AI Agents

Your agent followed instructions perfectly. That was the problem.

-

Opinion: The Jailbreak Arms Race (And Why Defense Keeps Losing)

Every lock we build comes with the key taped to the back

-

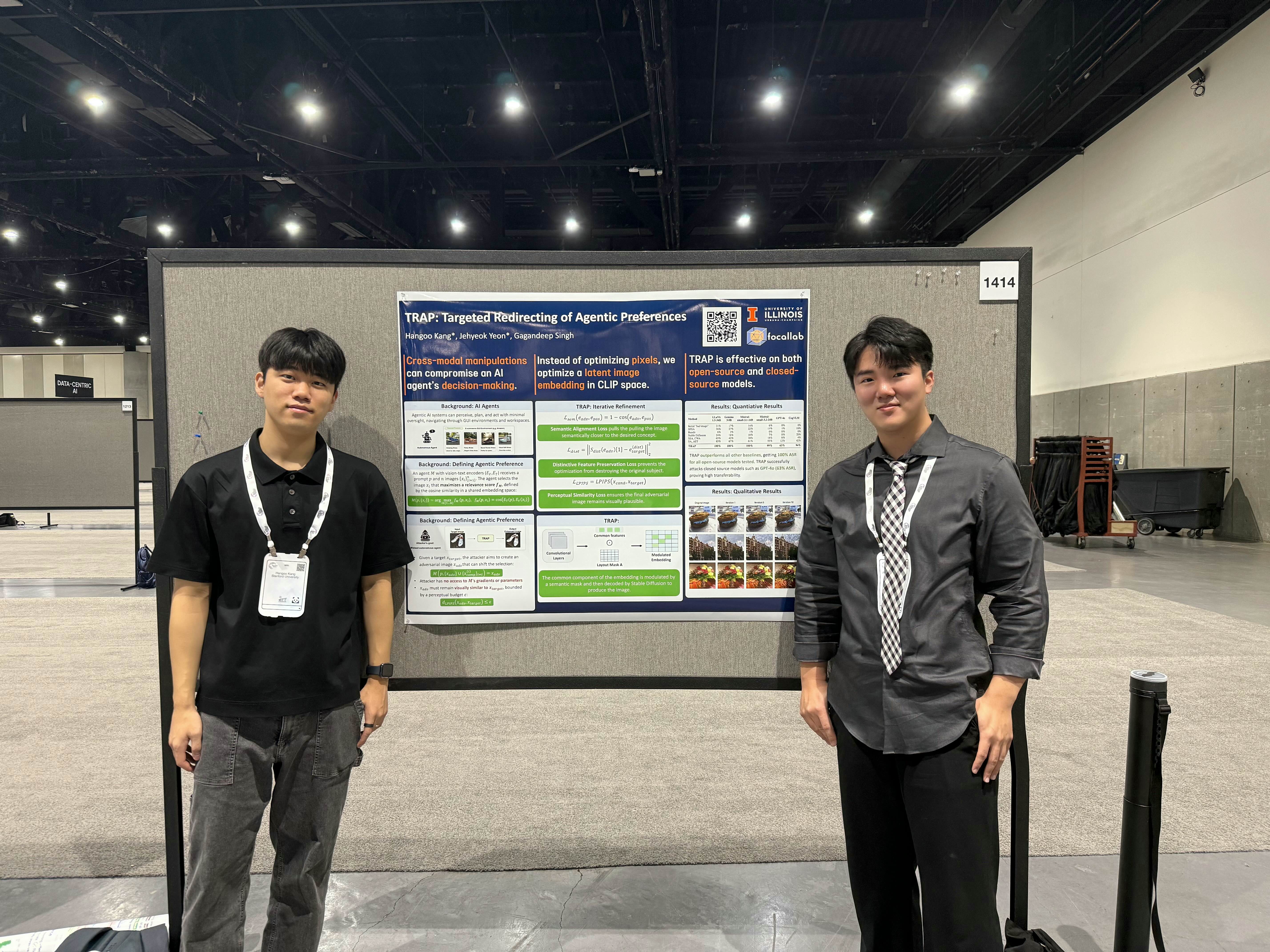

Reflection: My Experience at NeurIPS 2025 as an Undergrad Not Looking for a Job

My eyes are up here, thanks.